The Basics of Optics: How to Compare Different Lenses and Sensors

Photographers often think a lot about numbers, such as aperture, focal length, and ISO, while knowing very little about what’s really behind their pictures. Here’s a common-sense look at the critical, yet often quite confusing, relationships among different sensors, lenses, and final pictures.

Let’s start by describing some basics about how light works.

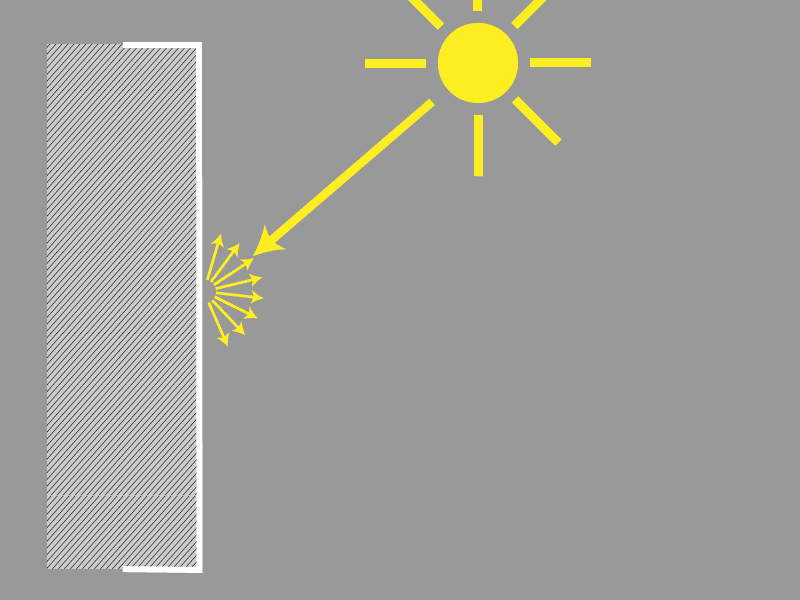

Light Is Reflected by Objects

Forget about lenses, lens speed, or resolution, and imagine you’re photographing an all-white wall. Light is falling onto the scene from the surroundings, and every point on the wall reflects incoming light equally in all directions. Thus every direction gets its own little packet of light energy.

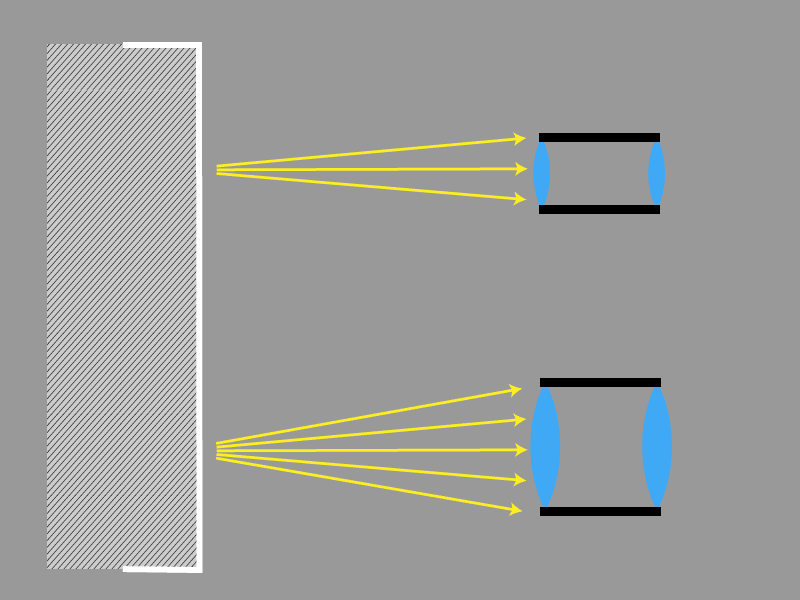

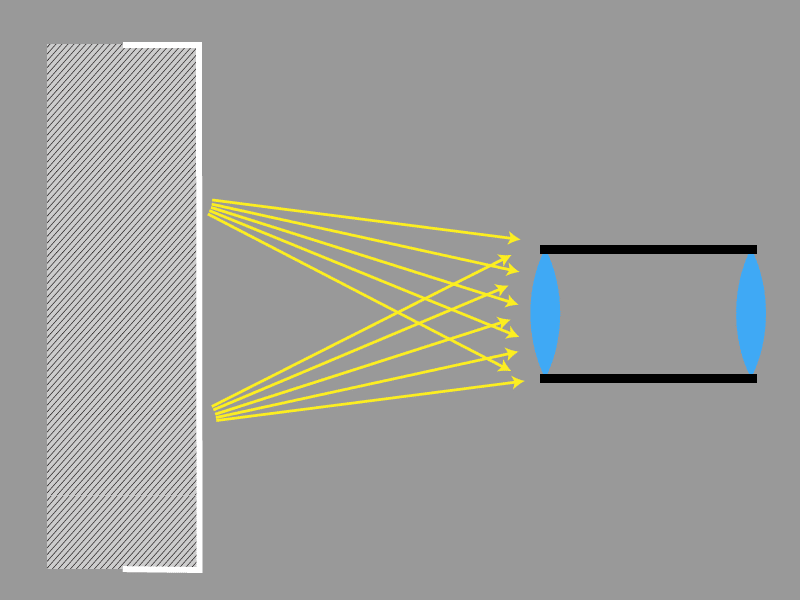

A lens is a simple device that can capture part of these rays and concentrate them onto a sensor. The size of the sensor plays no role at all in this process. Whether it is large or small is a minor detail that can be handled with a change of focus. After all, our experiment with a camera obscura proved that light can pass through even a small lens (a hole in a sheet) onto a giant sensor (a wall in a room).

A better lens assembly can also capture more distant rays and can focus them onto the sensor. Naturally, this also means that the individual lenses in it have to be larger and heavier.

However, a lens doesn’t just capture a single tiny point, nor an infinitely fleeting moment.

It captures a larger area, and the exposure takes time, and so overall, a certain number of photons hit the sensor, which we can describe in a simplified way as:

[photographed scene’s area] x [photons captured from a single point in the scene] x [time]

(if you’re allergic to formulas, don’t worry, this is the last one).

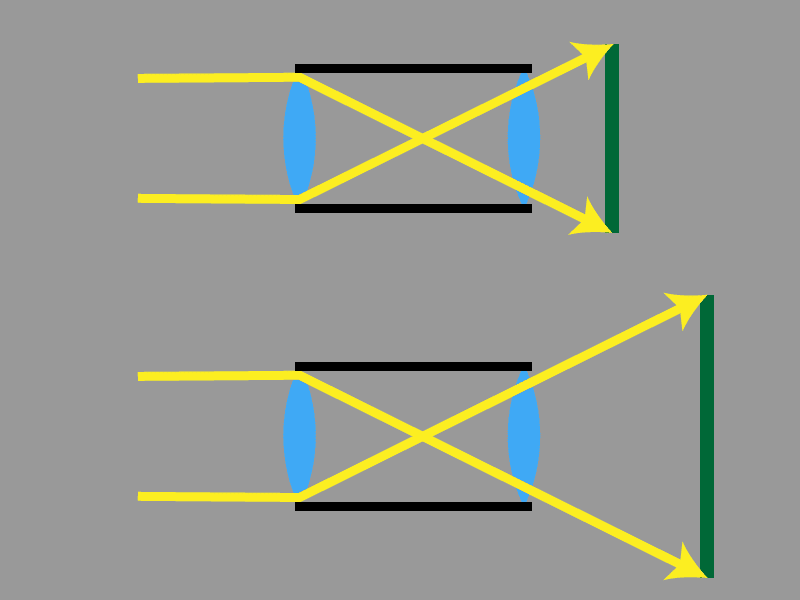

Now imagine that you’re changing your angle of view and you want to capture a much smaller part of the wall. Something seems off here: the photographed area is smaller, so by the formula above we should get a darker image.

And we would, except that in practice something else happens too. When zooming in, the lens also uses a larger opening to capture light. So while it’s capturing a smaller area, it also captures more light rays from each point in that area. This happens “behind your back”; the lens handles this automatically. You can watch any zoom lens from the front while changing focal lengths, and you’ll see the aperture getting larger and smaller.
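The simplified formula and the compensation described above can be sketched in a few lines of Python. This is only an illustration: the function name and all the numbers are made up, and the units are arbitrary. Only the proportionality comes from the text.

```python
def photons_collected(scene_area, photons_per_point_per_second, exposure_time_s):
    """Simplified photon count: area x photons per point x time."""
    return scene_area * photons_per_point_per_second * exposure_time_s


# Illustrative numbers only (arbitrary units).
wide = photons_collected(4.0, 1000, 0.01)

# Half the photographed area with the same opening: half the light.
tight = photons_collected(2.0, 1000, 0.01)

# Half the area, but the larger opening gathers twice as many photons
# from each point: the total is back where it started.
tight_compensated = photons_collected(2.0, 2000, 0.01)

print(wide, tight, tight_compensated)
```

The point of the sketch is that the smaller area and the larger opening cancel each other out, which is why the zoomed-in picture does not come out darker.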

The Question of Lens Parameters

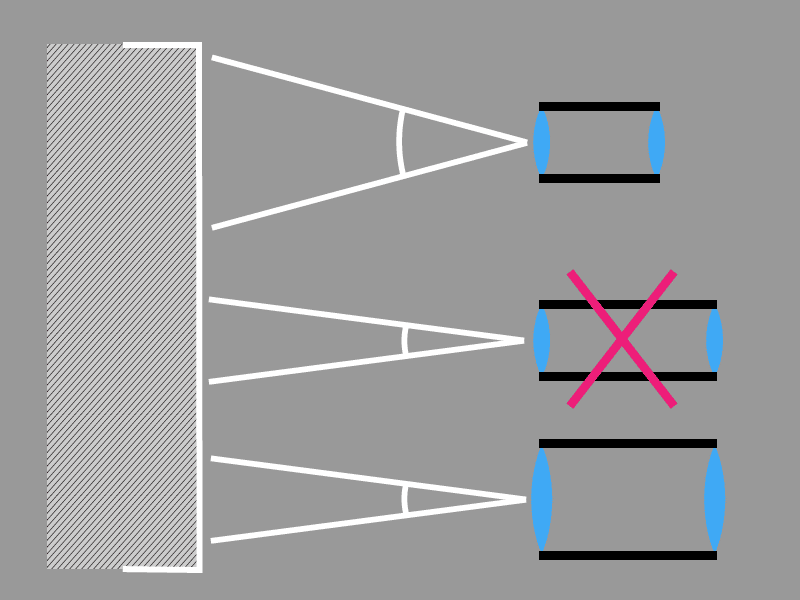

The problem when comparing different photographic systems is that there’s no easy way to determine the size of the lens aperture. The numbers that we learn about lenses are only abstractions, which are defined based on sensor size. For example, the focal length isn’t the physical dimension of a lens, but a virtual number set up so that the real angle of view matches the following drawing.

It’s the same with the other parameters. All this leads to the following series, where every term is defined based on the one before it.

Sensor size ← focal length ← lens speed/aperture ← ISO.

Sensor size is the only firmly fixed number; everything else is defined in relation to it.

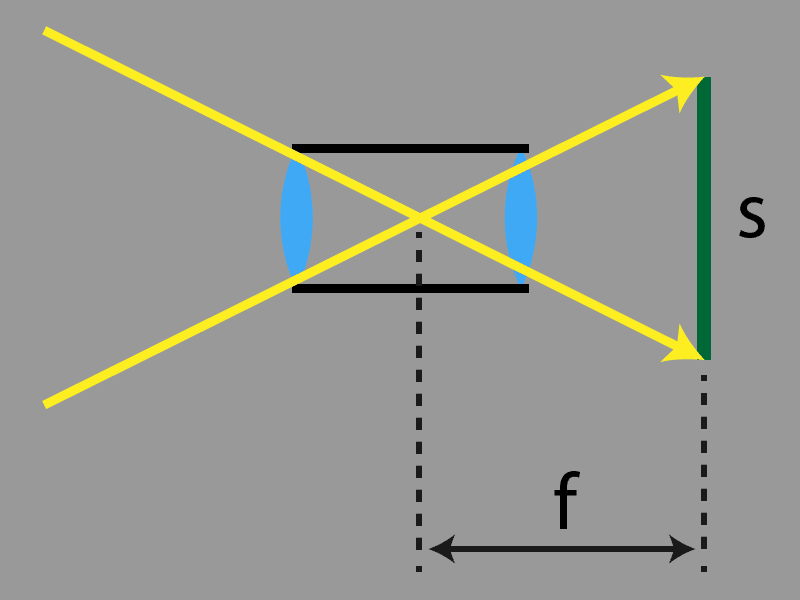

The focal length is set based on the drawing above.

The lens speed number tells us what to divide the focal length by to get the right aperture for light to flow through. This is the source of the typical “f/4” etc. notation, which is actually a ready-made little mathematical formula.
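Read literally, “f/4” says: take the focal length f and divide it by 4. A minimal Python sketch of that reading follows; the function name is just an illustrative choice.

```python
def aperture_diameter_mm(focal_length_mm, f_number):
    """Diameter of the opening implied by the "f/N" notation: f divided by N."""
    return focal_length_mm / f_number


print(aperture_diameter_mm(100, 4))  # 25.0
print(aperture_diameter_mm(50, 2))   # 25.0
```

Both calls give the same 25 mm opening, even though the focal lengths and the lens speed numbers differ.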

The ISO number for the sensor’s sensitivity is defined based on the specific lens speed number (and other factors). So its value directly depends on the sensor size, among other things. ISO 100 means something different in practice for a Micro Four Thirds sensor (crop 2x) than ISO 100 does for a Full Frame sensor (crop 1x); ISO 100 on the former corresponds to ISO 400 on the latter.

How to Compare Lenses

In order to compare lenses, you have to calculate things. It’s not enough to just compare the numbers provided by the manufacturers.

Most commonly, a conversion to Full Frame sensor size (e.g. Nikon FX type camera, Canon 5D type, etc.) is used. Other systems have what’s called a Crop Factor (Nikon presents their systems of this type as the DX line, for Canons, it’s the 800D etc.), which means that the given camera’s sensor is smaller than Full Frame. Crop 2x means a diagonal that’s 2x smaller than Full Frame, i.e. one quarter the sensor surface.

We’ve already noted that all of these numbers were artificially defined based on sensor size, and because of this, you need to convert not only the focal length, but also the lens speed/aperture, and in some cases the ISO as well. The focal length and the aperture number are multiplied by the Crop Factor. The ISO is always multiplied by the square of the Crop Factor. As a result, you get, for example, the focal length as converted to full frame.
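The conversion rule above is simple enough to write down as a small Python helper. This is a sketch of the rule as stated in this article, not a standard library routine; the function name is an illustrative choice.

```python
def to_full_frame(focal_length_mm, f_number, iso, crop_factor):
    """Convert native lens and sensor numbers to their full-frame equivalents.

    Focal length and f-number are multiplied by the crop factor,
    ISO by the square of the crop factor.
    """
    return (
        focal_length_mm * crop_factor,
        f_number * crop_factor,
        iso * crop_factor ** 2,
    )


# A crop 2x system at 50 mm, f/2, ISO 100:
print(to_full_frame(50, 2, 100, 2))  # (100, 4, 400)
```

The exposure time is deliberately not a parameter, because it stays the same on every system.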

A Few Sample Conversions

If you’d like to take identical photos on systems with different sensors, then because of the confusion described above, you’ll be using differently numbered focal lengths, apertures, and ISOs.

By identical photos, I mean the same angle of view, depth of field, and noise. The shots will be practically indistinguishable. We’re assuming here that we’re avoiding a blowout and that we’re using sensors with roughly the same degree of technological advancement, rather than one of them being e.g. 10 years older and thus significantly inferior.

I’m providing a few examples for illustration; they are all equivalent. Notice how for each example, the same exposure time was used; this is the only number that’s absolute, i.e. not relative to the sensor size.

Example 1:

Full frame (crop 1x): Focal length 100 mm, aperture f/4, ISO 400, time 1/100 s

Micro Four Thirds (crop 2x): Focal length 50 mm, aperture f/2, ISO 100, time 1/100 s

Example 2:

Full frame (crop 1x): Focal length 75 mm, aperture f/2.1, ISO 225, time 1/50 s

APS-C (crop 1.5x): Focal length 50 mm, aperture f/1.4, ISO 100, time 1/50 s

Example 3:

Full frame (crop 1x): Focal length 26 mm, aperture f/9, ISO 1800, time 1/1000 s

Mobile phone (crop 6x): Focal length 4.3 mm, aperture f/1.5, ISO 50, time 1/1000 s

A Frequent Problem in Specs and Reviews

Both manufacturers and reviewers like to present a lens’s speed for the original sensor size, e.g. f/2, which when converted means: Take the focal length f in millimeters and divide it by 2, and you’ll get the precise size of the aperture through which the lens “sucks in photons.”

You are not, however, told its original focal length f. Instead of that, you are (if you’re lucky) told its focal length as converted for full frame. However, you can’t divide the converted focal length by two as well; that would mean that the opening in the lens is suddenly larger. So the original lens speed is worthless for us. It would have to be converted too, but that has gotten “overlooked.”
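To see how large the error gets, here is a short Python sketch that uses the phone numbers from Example 3 (4.3 mm, f/1.5, crop 6x). The variable and function names are illustrative only.

```python
def aperture_diameter_mm(focal_length_mm, f_number):
    """Opening diameter implied by "f/N": focal length divided by N."""
    return focal_length_mm / f_number


crop_factor = 6
native_focal_mm = 4.3
native_f_number = 1.5
converted_focal_mm = native_focal_mm * crop_factor  # about 26 mm

# Native focal length with native lens speed: the real opening.
real_opening = aperture_diameter_mm(native_focal_mm, native_f_number)

# Converted focal length with the UNCONVERTED lens speed: the mix-up.
mixed_up = aperture_diameter_mm(converted_focal_mm, native_f_number)

# Converted focal length with converted lens speed: consistent again.
consistent = aperture_diameter_mm(converted_focal_mm, native_f_number * crop_factor)

print(f"real: {real_opening:.1f} mm, mixed up: {mixed_up:.1f} mm, consistent: {consistent:.1f} mm")
```

The mixed-up figure overstates the opening by exactly the crop factor, while converting both numbers brings back the real diameter of just under 3 mm.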

Where the Even Bigger Problem Is

The troubles above have always been with us, but with the arrival of phones with multiple focal lengths, things have gotten even more complicated. Every focal length has its own sensor with a different size.

This leads to the strange situation where not only are phone-camera parameters hard to compare to those of other cameras, but it’s also very hard to compare the individual tiny cameras inside a single phone among each other.

It’s common for a single review to state that a given phone has e.g. three cameras with lens speeds of f/1.5, f/1.9, and f/2.4, but these numbers are not easy to compare. You need to know the other parameters and have a crop-factor table and a calculator at hand, or else they’re worthless.
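As a rough sketch of what that calculation looks like, the Python snippet below converts the three lens speeds to full-frame terms. The crop factor of 6 for the first camera is borrowed from Example 3; the other two crop factors and the camera labels are hypothetical values chosen only for illustration, since a real phone’s values have to be looked up for each camera.

```python
# Lens speeds from the review; crop factors for B and C are HYPOTHETICAL.
cameras = {
    "camera A": {"f_number": 1.5, "crop_factor": 6.0},
    "camera B": {"f_number": 1.9, "crop_factor": 8.0},   # hypothetical crop
    "camera C": {"f_number": 2.4, "crop_factor": 10.0},  # hypothetical crop
}


def equivalent_f_number(f_number, crop_factor):
    """Lens speed converted to full-frame terms."""
    return f_number * crop_factor


equivalents = {
    name: equivalent_f_number(**spec) for name, spec in cameras.items()
}

for name, value in equivalents.items():
    native = cameras[name]["f_number"]
    print(f"{name}: f/{native} on the spec sheet, about f/{value:.1f} converted")
```

With these assumed crop factors, the gap between the cameras is considerably wider after conversion than the spec-sheet numbers suggest.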

Does Sensor Size Itself Matter at All?

Up to here, it’s seemed like sensor size only matters for the other numbers derived from it, but in fact there actually is a practical difference between small and large sensors.

The better performance of full frame cameras is due to their much larger lenses, which are able to capture a lot of photons from a single point in a scene. Paradoxically, a large sensor is only better in situations where the amount of light is large.

Today’s sensor technology is limited in that it lets photons pile onto the sensor and then it stores them. Only after the exposure ends does it count how many photons were captured in different areas.

Sensors can hold a limited number of photons, and at present the larger the sensor, the larger that capacity. The moment it’s exceeded, the photons “hit the max” and aren’t counted anymore, and so an all-white area—blowout—arises in the given spot.

This means that a large sensor is more tolerant towards large amounts of light, and so with a large sensor, blowout only occurs after a long exposure time. Meanwhile, for a smaller sensor, the exposure has to end far earlier (it can even be a difference of 1/100 second vs. 1/1000 second).
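The capacity limit can be modeled very crudely in Python. The photon rate and both capacities below are invented round numbers, and the model assumes that the same stream of photons arrives in both cases; real sensors are more complicated.

```python
def recorded_photons(photons_per_second, exposure_time_s, capacity):
    """Photons actually counted; anything above the capacity is lost (blowout)."""
    return min(photons_per_second * exposure_time_s, capacity)


def time_to_blowout_s(photons_per_second, capacity):
    """Exposure time after which the capacity is reached."""
    return capacity / photons_per_second


# Invented numbers for illustration only.
rate = 1_000_000          # photons per second arriving at one spot
small_capacity = 1_000    # hypothetical small sensor
large_capacity = 10_000   # hypothetical large sensor

print(time_to_blowout_s(rate, small_capacity))
print(time_to_blowout_s(rate, large_capacity))

# Exposing the small sensor for 1/100 s clips the count at its capacity.
print(recorded_photons(rate, 0.01, small_capacity))
```

With a tenfold difference in capacity, the exposure limits come out at 1/1000 s and 1/100 s, which is the kind of gap mentioned above.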

A Mobile Breakthrough

Recent mobile photography technologies are able to take several pictures in quick succession and then add or average the collected data. This enables them to gather a lot of light data without a blowout, vastly increasing picture quality. This approach, with the merging of multiple photos, is at an advantage over a single long exposure. For example, if the camera is moving, you can later compare the shots and remove those that are too blurred.

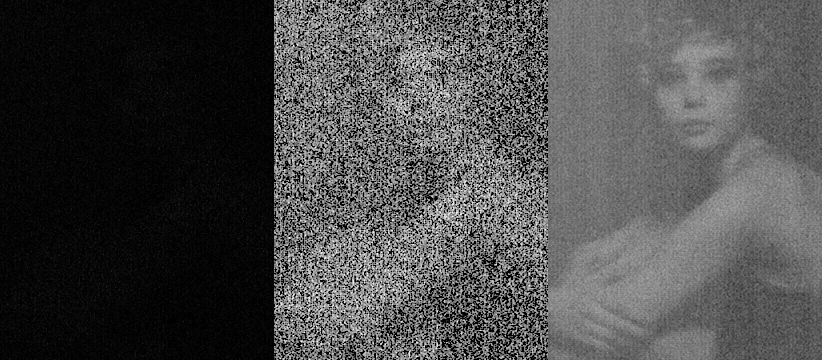

As an example, here’s a picture that I created manually with an LG G4 phone. I took the same very dark photo fifty times and then averaged everything on a computer. The noise was drastically reduced, and the dynamic range rose enormously, precisely because the device was working with a far larger amount of light.
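The effect of averaging can be reproduced with a small simulation. The Python sketch below is not the processing used for the picture above. It assumes simple Gaussian noise on a flat gray area, which is a simplification of real sensor noise, and all names and numbers are illustrative.

```python
import random
import statistics


def noisy_frame(true_value, noise_sigma, pixels, rng):
    """One simulated dark frame: the true brightness plus random noise."""
    return [rng.gauss(true_value, noise_sigma) for _ in range(pixels)]


def average_frames(frames):
    """Average the frames pixel by pixel."""
    return [sum(values) / len(values) for values in zip(*frames)]


rng = random.Random(0)  # fixed seed so the run is repeatable
frames = [noisy_frame(10.0, 5.0, 2000, rng) for _ in range(50)]

single_noise = statistics.pstdev(frames[0])
stacked_noise = statistics.pstdev(average_frames(frames))

print(f"noise in one frame:      {single_noise:.2f}")
print(f"noise after 50 averaged: {stacked_noise:.2f}")
```

For independent noise, averaging 50 frames should cut the noise by roughly the square root of 50, i.e. about seven times.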

It seems that these approaches will also make their way into higher camera classes in the form of the Olympus E-M1X and its Live ND feature. As of this writing, this camera is still just a hot news item, and no detailed information or tests are available. I’ll be watching, and you can watch too.

Consequences

My personal opinion is that we’re approaching a situation where blowout will practically cease to exist. Cameras will start taking lots of pictures with exposures so short that there’s practically no delay among them. I’d estimate that we’ll reach this situation within about five years.

Thanks to this, the achievable dynamic range will be nearly infinite. You want an enormous dynamic range? Just lengthen the exposure, absorb enough photons, and even at sunny noontime, you can easily expose for a minute instead of 1/1000 s.

Large sensors will start to lose their meaning, but lenses will still continue being decisive to some extent (certain effects such as depth of field can, however, already be provided by processors).

We’ll have to get used to systems with small sensors no longer lagging so far behind the bigger cameras, although there will still be differences. The biggest difference is probably the fact that a large lens can capture an image quickly, while for action-packed scenes, phone cameras can’t capture enough light before someone/something moves.

Football, hockey, etc. are good examples here, but so are action shots of your friends at the bar. That will be a tough challenge for phones to overcome. But who knows what surprises phone makers have prepared for us in the future.
